Coding in VR


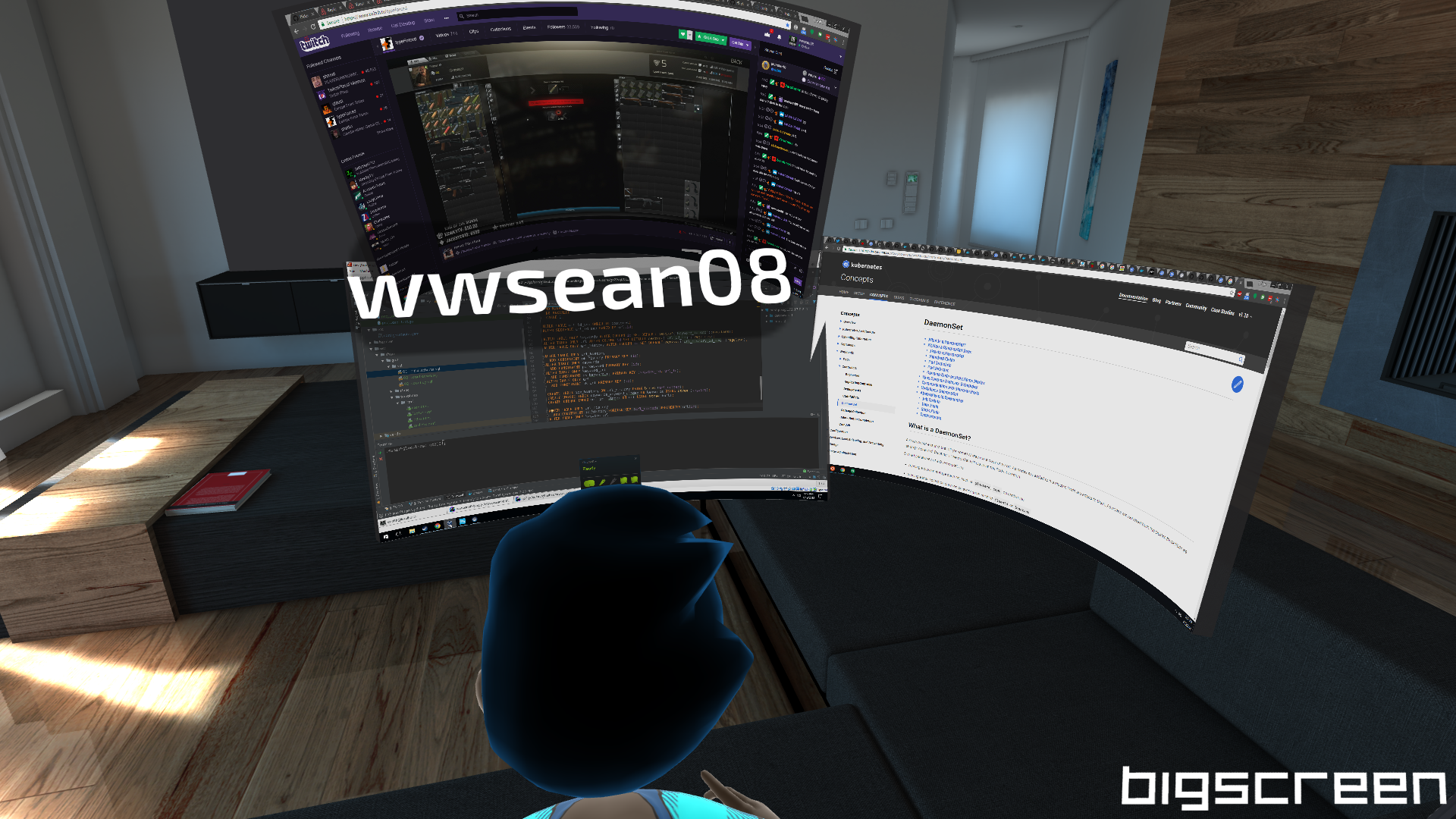

Ever since the HTC Vive was announced in 2015, one of my dream setups has been a true VR desktop experience where I could do my programming. The idea was to have free-floating windows surrounding me wherever I needed them. I know this may not be the best environment, but it was my dream, so once I got the HTC Vive Pro I decided to test it out as best I could.

Setup

Currently there is no desktop experience for the Vive that truly fits what I want: one where each window is independent. The closest is Bigscreen Beta, which is free. However, it divides the screens in your environment based on the number of displays connected to your computer, so if you have only one display, you have only one window to work with in the world. I have three, so it’s bearable, though far from perfect. Hopefully they will soon let you break application windows out of the display windows. I haven’t talked to them about it, though, and I understand it’s a startup and this may not even be on their roadmap.

Cons

Let’s start with the cons, because for some people the downsides are pretty bad. The first should be obvious: you’re isolated. You can’t see the world around you, so you can’t see your keyboard and mouse. This isn’t a big issue for me, but if you can’t type without looking, this setup will not work for you. Even if you can, it may still be difficult; you don’t realize how often you glance at the keyboard out of the corner of your eye while typing. Because of this isolation, any glasses, mugs, papers, or anything else on your desk is also liable to get knocked over, so be careful and expect accidents to happen.

The screen door effect is still there. It’s not as bad as with the original Vive, but it does exist: text is more readable both in and out of the sweet spot, yet you can still see individual pixels if you look for them. Unfortunately, I don’t have quantifiable numbers, just my own perception.

The Vive Pro, while lighter and better balanced than the original Vive, isn’t weightless. For long sessions it’s not as comfortable as regular monitors, which don’t put any extra weight on your head.

Pros

You can eliminate distractions; there’s no need to worry about what’s going on in the world around you. Don’t like the lighting in your environment? Change which “room” you’re in, or go into the void, where everything is black except for your screens and the hands Bigscreen shows for controlling your windows.

You can also invite others to join you for pair programming in Bigscreen, whether you know them or not. You could get something similar by streaming your work on Twitch, YouTube, or another streaming service, but this is yet another option.

Conclusion

I wouldn’t consider programming in VR an everyday thing, especially with two cats that sometimes want to sit on the keyboard and mouse in front of me and love to steal attention and affection. Still, it could be useful for people trying to block everything out and focus on their work without distractions. I’d also like to credit DisplayFusion: it keeps my mouse from getting stuck between screens, gives me an individual taskbar for each display, and lets my mouse wrap around from the far edge of my monitors. Without it, I probably would not have had nearly as good an experience with this experiment.

One final note: this whole blog post was written in Bigscreen using my HTC Vive Pro. Neither HTC nor Bigscreen is sponsoring this post.

A screenshot from my first time coding in Bigscreen VR

Ⓒ 2018 Sean Smith